HEALTH CARE PROJECTS

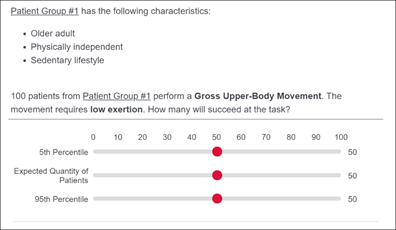

Food and Drug Administration: Expert Elicited Database for Patient Performance

As an alternative to in-person human factors device evaluations, an expert data-driven approach is proposed to predict the device interaction task performance of heterogeneous user groups. This research is particularly relevant as medical device designers devise methods to address COVID-19 restrictions during product development activities that require usability testing. Expert judgment plays an essential role in statistical inference and in supporting evidence-based decision-making, especially when data are sparse and difficult to collect. Performance estimates for the generic tasks required for device interaction will be elicited from Internal Medicine experts because of their expertise in assessing cognitive and physical functioning across the human body. These data will be used to simulate the population of interest and to predict the risk of use error for a given device. This research will serve as the foundation for identifying critical task failures that do not meet acceptable failure thresholds and for focusing later stages of the design process on mitigating the risk of those failures for specific user groups.
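
The simulation step described above could be sketched roughly as follows. This is a minimal illustration, not the project's actual method: the task names, the elicited probabilities, the equal-weight pooling of expert estimates, the assumption that task failures are independent, and the 5% threshold are all hypothetical choices made for the example.

```python
import random
from statistics import mean

# Hypothetical elicited estimates: each expert's judged probability that a
# user in the group fails the generic task. Values are illustrative only.
ELICITED = {
    "grip_and_twist": [0.02, 0.05, 0.03],
    "read_small_display": [0.10, 0.20, 0.15],
    "recall_dose_instruction": [0.08, 0.12, 0.10],
}


def pooled_failure_probability(estimates):
    """Equal-weight linear opinion pool: the mean of the expert estimates."""
    return mean(estimates)


def simulate_use_error_risk(elicited, n_users=10_000, seed=0):
    """Monte Carlo estimate of per-task and any-task failure rates.

    Assumes task failures are independent, which is a simplification.
    """
    rng = random.Random(seed)
    pooled = {task: pooled_failure_probability(p) for task, p in elicited.items()}
    task_failures = {task: 0 for task in pooled}
    any_failure = 0
    for _ in range(n_users):
        failed = False
        for task, p in pooled.items():
            if rng.random() < p:
                task_failures[task] += 1
                failed = True
        any_failure += failed
    rates = {task: count / n_users for task, count in task_failures.items()}
    return rates, any_failure / n_users


def critical_tasks(rates, threshold):
    """Tasks whose simulated failure rate exceeds the acceptable threshold."""
    return sorted(task for task, rate in rates.items() if rate > threshold)


if __name__ == "__main__":
    rates, overall = simulate_use_error_risk(ELICITED)
    print("per-task failure rates:", rates)
    print("probability of at least one use error:", overall)
    print("critical tasks at a 5% threshold:", critical_tasks(rates, 0.05))
```

In practice the pooling rule, the dependence between tasks, and the acceptable failure threshold would each need to be justified for the device and user group in question.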

Food and Drug Administration: Subjective Performance Metric Development and Validation in Simulated Use Tests

This research will address the need to identify reliable human performance outcomes during device design validation activities as part of the medical device development and approval process. FDA’s summative human factors evaluation requires medical device manufacturers to provide evidence that their device is safe and effective through simulated-use testing. However, for non-observable tasks (recalling information, making judgments, etc.), which can dominate device interaction, collecting subjective performance data is particularly challenging and highly susceptible to erroneous reporting. This project will develop and validate a structured methodology to integrate cognitive task decompositions with standardized question syntax. The approach will be empirically validated in a simulated-use test on a generic automated external defibrillator. A virtual web interface was developed to pilot test the methodology while in-person testing is restricted due to COVID-19. Results of this research will inform standardized guidelines for human factors design validation activities.

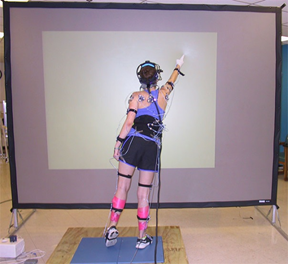

Veterans Administration: An Engineering-Based Balance Assessment and Training

This research focuses on the development and validation of a virtual reality (VR) system to aid in balance and reach tests for individuals with compromised motor functioning. The HSIS Lab is developing VR simulation models that assess balance through test scenarios requiring the user to reach predefined target spheres. This phase of the development includes calibrating the VR subsystem so that the programmed speed and location of the VR sphere coincide with its perceived speed and location in the physical environment.

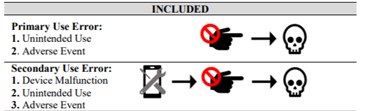

Medical Device Adverse Event Analysis

Use error associated with medical devices can result in catastrophic consequences for end users and inefficient use of healthcare system resources. To address the lack of industry-wide statistics on medical device use error, this study provides a comprehensive evaluation of use error adverse events in the Food and Drug Administration Manufacturer and User Facility Device Experience (MAUDE) database based on device class, device operator, and event outcome. The following questions were posed: 1) How prevalent is use error in adverse event reporting? 2) How do these values compare for user groups (patient and provider) and high-risk chronic disease groups? 3) What trends exist for different categories of adverse events? 4) How do event outcomes vary across device operators and disease groups? To answer these questions, MAUDE was queried and filtered based on relevant criteria. Results indicate that use error is significantly represented in adverse event reporting. This work demonstrates the viability of using MAUDE to obtain industry-wide statistics on medical device use error for later integration into industry-wide or device-specific risk mitigation strategies.
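
As a rough illustration of the first two questions, the prevalence calculation might look like the sketch below once reports have been filtered and labeled. The records and field names (`operator`, `use_error`, `outcome`) are hypothetical and do not mirror the actual MAUDE export schema or the filtering criteria used in the study.

```python
from collections import Counter

# Hypothetical, hand-made records; field names are illustrative and do not
# mirror the actual MAUDE export schema.
REPORTS = [
    {"operator": "patient", "use_error": True, "outcome": "injury"},
    {"operator": "patient", "use_error": False, "outcome": "malfunction"},
    {"operator": "provider", "use_error": True, "outcome": "malfunction"},
    {"operator": "provider", "use_error": False, "outcome": "injury"},
    {"operator": "provider", "use_error": False, "outcome": "malfunction"},
]


def use_error_prevalence(reports):
    """Share of reports flagged as use error, overall and per operator."""
    totals = Counter(r["operator"] for r in reports)
    flagged = Counter(r["operator"] for r in reports if r["use_error"])
    by_operator = {op: flagged[op] / totals[op] for op in totals}
    overall = sum(flagged.values()) / len(reports) if reports else 0.0
    return overall, by_operator


if __name__ == "__main__":
    overall, by_operator = use_error_prevalence(REPORTS)
    print("overall use error share:", overall)
    print("use error share by operator:", by_operator)
```

The same grouping could be repeated over device class, disease group, or event outcome to address the remaining questions.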

DEFENSE AND ENERGY PROJECTS

Navy Engineering Education Consortium: Empirical Human Performance Modeling for Performance Support Applications

This project will investigate the influence of mixed reality system design specifications on human performance outcomes and cognitive workload for unmanned vehicle controls. A unique experimental facility (UMD’s Virtual Reality CAVE) will be used to immerse participants in multi-modal simulated unmanned control environments, where physical objects will be integrated into a virtual space. The results of this research will inform mixed reality design for control interfaces by reducing the risks associated with cognitive workload and improving system safety. Student engagement is integral to the Navy Engineering Education Consortium goals. This will be accomplished through project integration into a graduate Human Reliability Analysis course and an undergraduate senior design capstone course, among other independent research activities. The ultimate goal is to create a pipeline of students who are trained through formal and hands-on experience to design, evaluate, and implement human-centered systems across the Navy.

Naval Air Systems Command: Human Performance Modeling and Virtual Reality Simulation for UAV Systems

This research used empirical human performance modeling and simulation to determine the relationship between neurophysiological predictors of human performance and unmanned aerial vehicle (UAV) operator performance. A Virtual Reality CAVE was used to simulate control and monitoring tasks with varying information visualization scenarios and workstation layouts. In addition, levels of cognitive workload and operator vigilance were studied. Error classification models were applied to further evaluate the cognitive processes associated with error and to propose design risk mitigation strategies. The results of this research provided a basis to inform HSI design and reduce the risks associated with cognitive load, thus improving UAV operator performance and system safety.

Nuclear Regulatory Commission: Human Reliability Performance Modeling and Experimentation

NRC advocates integrated system validation using performance-based tests to determine whether a human-system interface (HSI) meets human performance requirements and supports the plant’s safe operation. However, there is currently a gap in empirically linking human factors control room design guidance to human performance requirements. This research aims to address this gap through empirical studies that evaluate the impact of design elements on operators as they interact with safety-critical hardware and software interfaces in simulated control room environments.